Search engine optimization (SEO) is the practice of improving a website’s visibility in search engine results. On-page, off-page, and technical SEO are the three most prominent types. In this article, we discuss the technical SEO aspects of your website. Technical SEO builds a solid foundation for your website so you can apply other SEO techniques without hiccups. This 2022 technical SEO checklist covers the must-do technical SEO practices that help you reach your goals.

What Is Technical SEO?

Technical SEO is concerned with optimizing the back end of a website. Its main goal is to make the structure of a website fundamentally sound so that search engine robots can easily crawl and index it.

Technical SEO improves the technical side of the website to increase its visibility on the search engine results page (SERP), so that the audience can easily find it.

Importance of Technical SEO Checklist

Technical SEO is essential. The whole SEO effort could fail if you do not take it seriously. Focusing only on on-page and off-page SEO will not rank your website higher in search engines, and good content alone will not help you. Your website needs to be fundamentally sound.

An optimized, error-free, secure, fast, and fundamentally sound website is the main target of technical SEO. Once you achieve that, you can concentrate on other types of SEO.

For a new website, search engines first need to find it. Then they crawl and index the website, its pages, and its posts. And that’s not all: you also have to make the website optimized, secure, mobile-friendly, duplicate-free, and fast.

Having a technical SEO checklist will help you build your website according to search engine guidelines. That’s why we start with technical SEO rather than on-page or off-page SEO.

How to Do Technical SEO?

Nobody wants to browse a slow or laggy website. While doing technical SEO, we have to make sure that performance, crawlability, and indexation are on point. Our main goal is a website that is fast, mobile-optimized, easily accessible, secure, free of error codes, and crawlable and indexable by search engines. To achieve all this, we have to work through the technical SEO checklist.

Structure of the Website

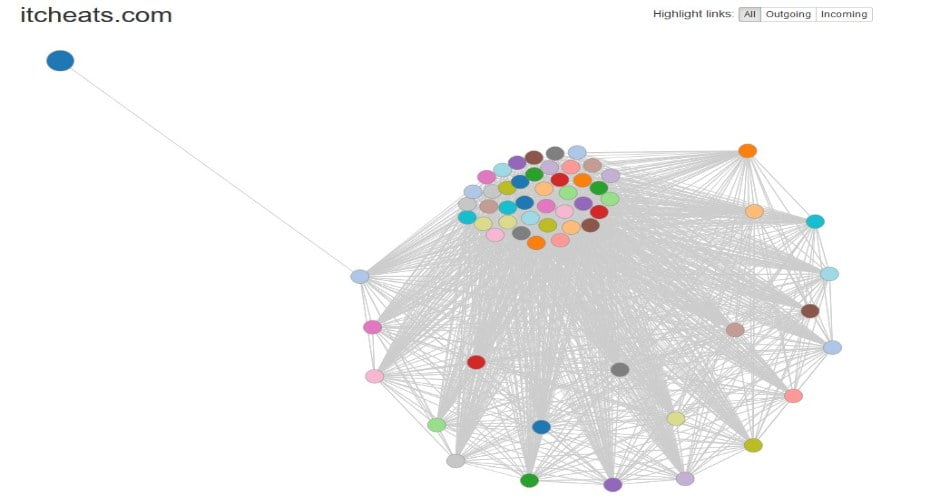

A messy website structure may cause crawling and indexing problems. The URL structure, sitemap, and robots.txt can all be affected if you do not organize your website correctly. The website structure should be flat: every page should be only a few links away. A flat structure helps search engines crawl website pages effortlessly. We can inspect the site structure with Ahrefs “Site Audit” and get a visual view of it with Visual Site Mapper.
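To make "a few links away" concrete, here is a minimal sketch that measures click depth with a breadth-first search. It assumes depth is counted from the homepage, and the `site_links` graph is a made-up example, not data from a real audit tool.

```python
from collections import deque

def click_depths(links, home):
    """Return the minimum number of clicks needed to reach each page from `home`."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal-link graph: page -> pages it links to.
site_links = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/blog/technical-seo/"],
    "/services/": [],
    "/blog/technical-seo/": [],
}

depths = click_depths(site_links, "/")
deepest = max(depths.values())
print(depths, deepest)
```

The smaller the deepest value, the flatter the structure. Pages that never show up in `depths` are not reachable through internal links at all.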

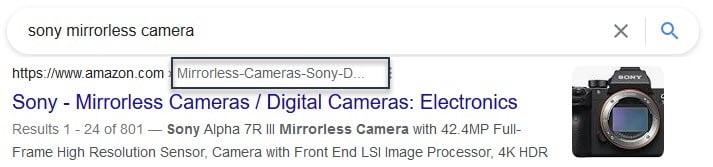

URL Structure

URL structure affects both user experience and rankings. We should create URLs that are easy for both humans and search engines to understand. A URL should give an idea of what is on the page or post. If users can tell what they will find before clicking the URL, it enhances the user experience. The URL is a minor ranking factor, and simple, accurate, and relevant URLs work in its favor.

Example of Good URL Structure
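As a rough illustration, the sketch below turns a post title into a short, readable URL. The `slugify` and `build_url` helpers, the `example.com` domain, and the post title are all assumptions made for this example.

```python
import re
import unicodedata

def slugify(title):
    """Convert a title into a lowercase, hyphen-separated URL slug."""
    text = unicodedata.normalize("NFKD", title)
    text = text.encode("ascii", "ignore").decode("ascii")  # drop accents and symbols
    text = re.sub(r"[^a-z0-9]+", "-", text.lower())        # non-alphanumerics -> hyphen
    return text.strip("-")

def build_url(base, section, title):
    """Join a base URL, a section, and a slugified title."""
    return f"{base.rstrip('/')}/{section}/{slugify(title)}/"

# Hypothetical site and post title.
url = build_url("https://example.com", "blog", "Technical SEO Checklist: What to Do First?")
print(url)
```

A URL like this tells both the visitor and the search engine what the page is about, unlike an opaque one such as `https://example.com/?p=123`.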

Submitting Website to Search Engine for Indexing

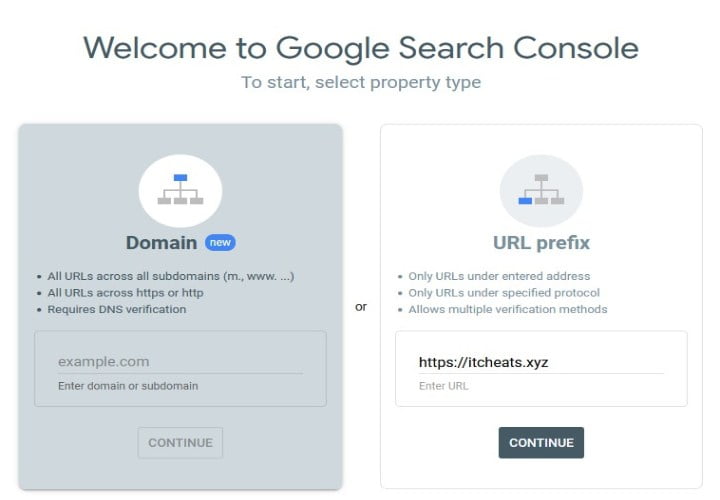

One of the crucial aspects of technical SEO is indexing. Usually, search engine bots crawl and index your website without you doing anything. However, you can make things easier for them by submitting your website to the search engines’ webmaster tools. Google, Bing, Yandex, Baidu, and others each have their own: for Google, it’s called Google Search Console, and for Bing, it is Bing Webmaster Tools. The process of submitting a website is mostly the same everywhere. Here we show the process of submitting a website to Google.

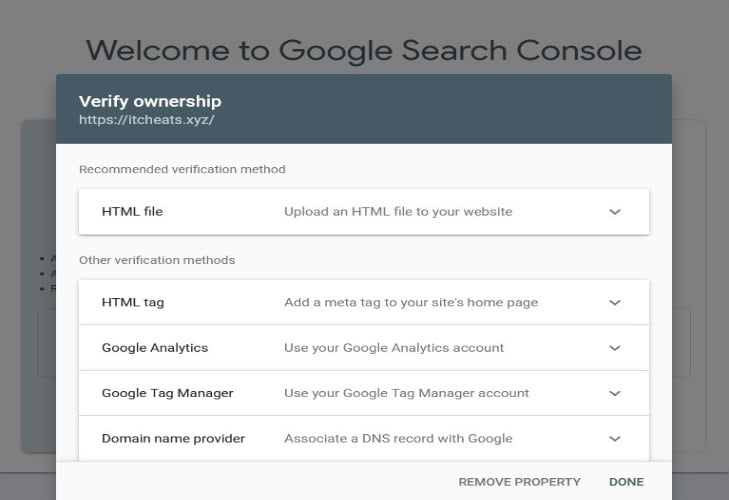

First, we need to go to Google Search Console. Then enter the website URL in the URL prefix section and click Continue. A verification process will start, and Google will give us options to verify ownership of the website.

Google Search Console provides five methods to verify ownership.

- HTML file

- HTML tag

- Google Analytics

- Google Tag Manager

- Domain name provider

You can choose your preferred method, but we recommend the HTML tag method. For this method, you need to copy the meta tag and paste it into the site’s homepage. The tag should be in the <head> section, before the first <body> section.
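Before clicking Verify, it can help to check that the tag really sits inside the <head> section. The sketch below does that with Python’s standard HTML parser. The `page` markup and the `PLACEHOLDER_TOKEN` value are invented for the example; the real token is the one Search Console gives you.

```python
from html.parser import HTMLParser

class VerificationTagFinder(HTMLParser):
    """Collect the google-site-verification token if it appears inside <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.token = None

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "meta" and self.in_head:
            attributes = dict(attrs)
            if attributes.get("name") == "google-site-verification":
                self.token = attributes.get("content")

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False

def find_verification_token(html):
    """Return the token found in <head>, or None if the tag is missing or misplaced."""
    finder = VerificationTagFinder()
    finder.feed(html)
    return finder.token

# Hypothetical homepage markup with a placeholder token.
page = """<html><head>
<meta name="google-site-verification" content="PLACEHOLDER_TOKEN">
<title>Home</title>
</head><body><p>Welcome</p></body></html>"""

print(find_verification_token(page))
```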

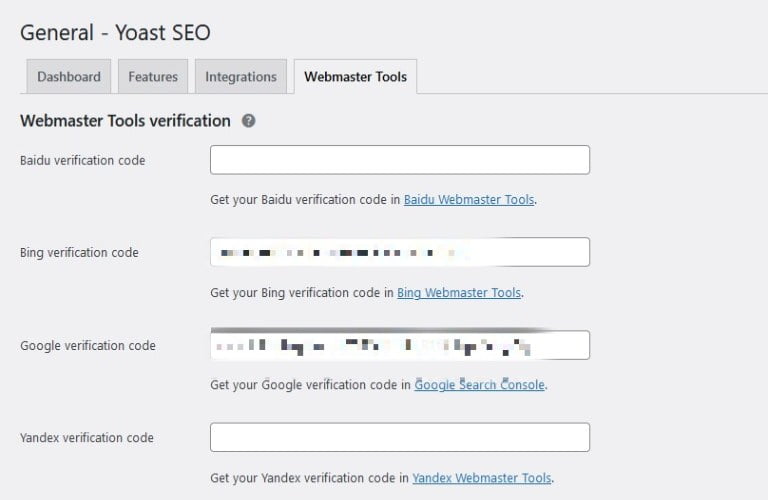

For WordPress, you can use a plugin such as Yoast SEO. Paste the meta code in Yoast’s Webmaster Tools section and verify.

Google Search Console Verification using Yoast

XML Sitemap

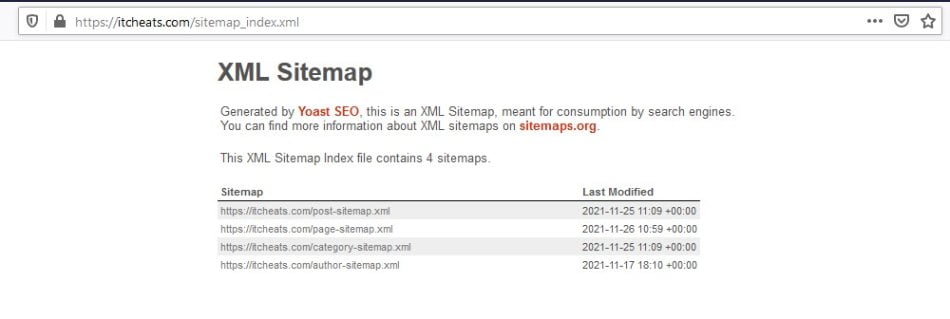

In simple words, a sitemap is a map of a website: a list of its URLs. It’s a way for search engines to find the contents of a website and crawl them. A sitemap also helps search engines understand the website structure. XML sitemaps contain a list of URLs along with metadata.

Search engine crawlers usually discover and index pages through internal and external links. A submitted sitemap can help them crawl and index website content. However, submitting a sitemap to a search engine does not guarantee that the content will be indexed.

We can generate an XML sitemap by using Screaming Frog SEO Spider. The free version allows up to 500 URLs for crawling and generating an XML sitemap. For more than 500 URLs, we will need the paid version.
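For a small site, a sitemap can also be produced with a few lines of code. This is a minimal sketch using Python’s standard library and the sitemaps.org namespace. The URLs and dates in `pages` are placeholders, and a real site would normally rely on its CMS or a crawler instead.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """Build an XML sitemap string from (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # the page URL
        ET.SubElement(url, "lastmod").text = lastmod  # metadata: last change date
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Hypothetical pages and modification dates.
pages = [
    ("https://example.com/", "2022-01-15"),
    ("https://example.com/blog/technical-seo-checklist/", "2022-02-01"),
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

The resulting file is typically saved as `sitemap.xml` in the site root and then submitted through the search engine’s webmaster tools.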

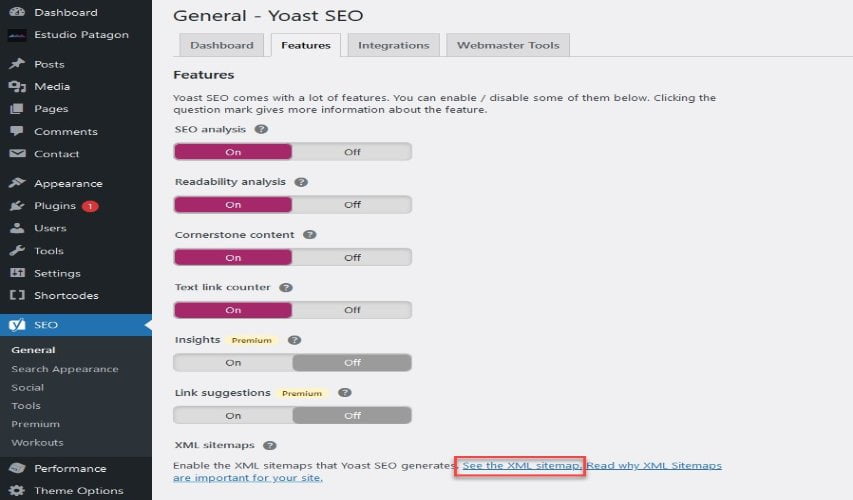

If you use a CMS, it has most likely already generated a sitemap for search engines. For WordPress, you can use plugins such as Yoast SEO to generate an XML sitemap.

XML sitemap using Yoast SEO plugin

Robots Exclusion Standard or Robots.txt

Robots.txt is a text file located in the root or main directory of a website. It contains a set of instructions for search engine crawlers or bots, specifying which pages or sections may be crawled and which may not. Search engines (Google, Bing, and Yahoo) usually follow this instruction set; however, they are not bound to do so. A robots.txt file is mainly used to keep crawlers or bots from overloading a website.

Many indexing issues trace back to the robots.txt file, often because of its disallowed sections.

The Basic Syntax of Robots.txt File

| Directive | Meaning |
| --- | --- |
| User-agent: * | * means all crawlers. We can also name a specific crawler: using “Googlebot” instead of “*” means the instructions apply only to Google’s crawler. |
| Disallow: /photos | Content that crawlers should not crawl |
| Allow: /photos/ABC/ | Content that crawlers may crawl |
| Sitemap: | Points web crawlers to the XML sitemap |

There are other directives available for the robots.txt file. But we are not going to describe those here.

Example of Robots.txt
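The directives above can be tried out with Python’s built-in `urllib.robotparser`. This is only a sketch: the rules and the `example.com` URLs are made up, and Python’s parser applies the first matching rule, so the `Allow` line is listed before the broader `Disallow` line. Other crawlers may resolve the same rules differently.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for https://example.com
ROBOTS_TXT = """\
User-agent: *
Allow: /photos/ABC/
Disallow: /photos
Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def is_allowed(path, agent="*"):
    """Return True if `agent` may crawl `path` under the rules above."""
    return parser.can_fetch(agent, "https://example.com" + path)

print(is_allowed("/photos/ABC/pic.jpg"))  # inside the allowed subfolder
print(is_allowed("/photos/private.jpg"))  # inside the disallowed section
print(is_allowed("/blog/"))               # not mentioned by any rule
print(parser.site_maps())                 # sitemap URLs listed in the file
```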

Limitations of Robots.txt

- Not all search engine bots support it, and different web crawlers may interpret the directives differently.

- Disallowed content can still be indexed, for example when other sites link to it.

How to Create Robots.txt

You can create a robots.txt file using cPanel. Go to your hosting provider’s cPanel, open the File Manager, and go to the root directory of the website. You can either create or edit the robots.txt file there.

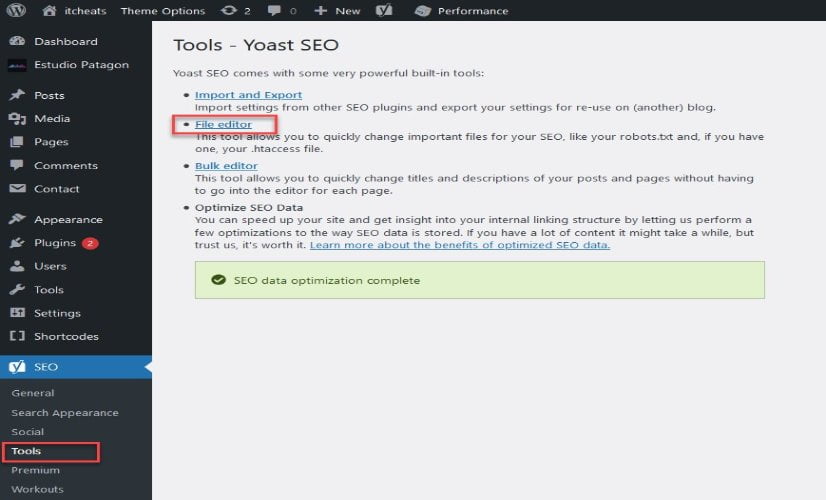

For WordPress, you can use the Yoast SEO plugin. Go to the Yoast dashboard, then to Tools and File Editor. You can now view and edit the robots.txt file.

Yoast Robots.txt file editor

Website Security

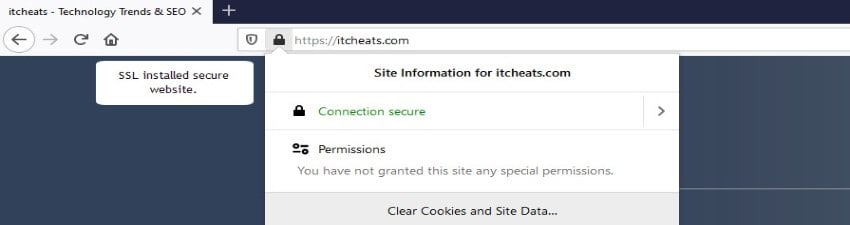

Website security is a factor in SEO ranking. A website with security measures in place is trustworthy to search engines as well as to visitors. Any website’s ultimate goal is to reach a larger audience, and users want more than relevant content: they want to browse safely and interact on the web without fear of data breaches. Search engines also value websites that use security protocols. If you want your website to be secure, you need to switch to HTTPS. You can do that by installing an SSL certificate.

You can get an SSL certificate from hosting providers. Let’s Encrypt and Cloudflare offer free SSL certificates.

A website without an SSL certificate

A website with an SSL certificate

Hypertext Transfer Protocol Response Status Codes

These codes are returned by web servers when a user requests a particular page. Some common codes are:

| Status code | Meaning |
| --- | --- |
| Status code 200 | It is a success code, which means the page loaded successfully. |
| Status code 404 | Page not found or no longer available. |
| Status code 500 | Internal server error. Something went wrong on the server while trying to return a page. These errors may be temporary, but persistent ones need to be investigated and fixed. |
| Status code 503 | Service is unavailable. The server is down for maintenance or is overloaded. |
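Python’s standard library already knows the official phrase for each status code, which makes for a quick reference. The `describe` helper below is a small illustrative wrapper, not part of any SEO tool.

```python
from http import HTTPStatus

# Status code families, keyed by the first digit.
FAMILIES = {
    1: "informational",
    2: "success",
    3: "redirect",
    4: "client error",
    5: "server error",
}

def describe(code):
    """Return a short human-readable description of an HTTP status code."""
    status = HTTPStatus(code)  # raises ValueError for unknown codes
    return f"{code} {status.phrase} ({FAMILIES[code // 100]})"

for code in (200, 301, 404, 500, 503):
    print(describe(code))
```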

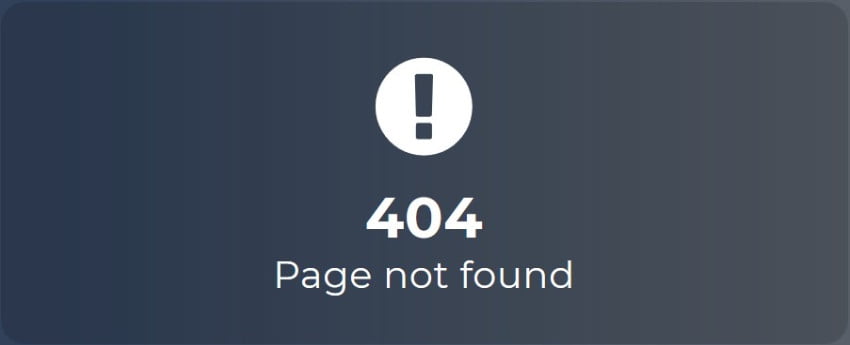

404 Pages

A 404 error response means that no page exists at the requested URL. Returning a success code instead of a 404 for a non-existent page is bad practice for SEO. A success status code may mislead search engines into indexing non-existent pages and listing them in search results. Search engines may also keep crawling those non-existent URLs, wasting resources.

404 error code – Page not found
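The difference between a proper 404 and a misleading success code can be sketched in a few lines. Both functions and the `PAGES` dictionary are hypothetical. The text check in `is_soft_404` is a crude heuristic for illustration only.

```python
def respond(path, pages):
    """Return (status_code, body) for a request path."""
    if path in pages:
        return 200, pages[path]
    return 404, "Page not found"  # correct: missing pages get a 404 status

def is_soft_404(status, body):
    """Flag responses that say 'not found' but still report success."""
    return status == 200 and "not found" in body.lower()

# Hypothetical site content.
PAGES = {"/": "Welcome to our site", "/blog/": "Latest posts"}

print(respond("/blog/", PAGES))
print(respond("/missing/", PAGES))
print(is_soft_404(200, "Sorry, page not found"))  # misconfigured server
print(is_soft_404(404, "Page not found"))         # correctly configured server
```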

There is a misconception that all 404 error pages are bad for SEO. If your website has a lot of 404 error pages, you need to reduce that number. However, if you have 404 pages that have a significant number of backlinks or used to receive a lot of traffic, it’s a good idea to preserve the authority of those pages, for example by redirecting them to a relevant page.

If a visitor lands on a 404 error page, a friendly interface and good navigation help keep them on the website and guide them to other sections.

To find 404 error pages, we can use Google Search Console or crawl the website with a tool such as Screaming Frog.

Redirects 101

Redirects send visitors from one URL to another. There are several types of redirects, such as permanent and temporary redirects. A 301 status code is a permanent redirect; a 302 status code is a temporary redirect. In most cases we should use a permanent redirect, as it tells search engines that the old page is no longer available and has a permanent substitute. This can help the newer page rank by transferring the authority of the old page. Temporary redirects tell search engines that the change is for a limited time and that no authority should be transferred.

It is best practice to redirect an old page to the page that best matches its content. Redirecting old pages to the homepage creates a poor user experience and is advisable only if there is no better page to redirect to.
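A redirect map can be thought of as a simple lookup table. The sketch below uses made-up paths and a hypothetical `resolve` helper: it follows redirects from an old path to its final destination and records each hop, which also makes long redirect chains easy to spot.

```python
# Hypothetical redirect map: old path -> (status code, new path).
REDIRECTS = {
    "/old-seo-guide/": (301, "/technical-seo-checklist/"),  # permanent move
    "/summer-sale/": (302, "/sale/"),                        # temporary move
    "/seo-tips-2020/": (301, "/old-seo-guide/"),             # creates a chain
}

def resolve(path, redirects, max_hops=5):
    """Follow redirects from `path`; return the final path and the hops taken."""
    hops = []
    while path in redirects and len(hops) < max_hops:  # max_hops guards against loops
        status, target = redirects[path]
        hops.append((status, target))
        path = target
    return path, hops

final, hops = resolve("/seo-tips-2020/", REDIRECTS)
print(final, hops)
```

When a lookup returns more than one hop, it is usually better to point the old URL straight at the final page.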

Mobile-Optimized

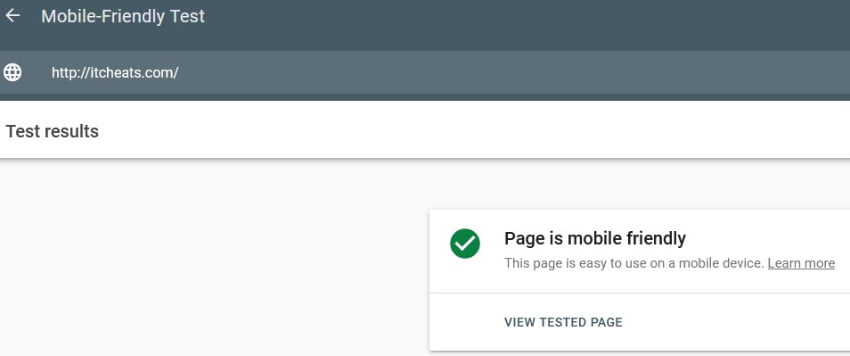

Responsive website design has tremendous value in SEO. Google and other search engines prefer mobile-friendly websites. Users are now using mobile devices more to browse the internet. If a website is not mobile-friendly, it loses its audience.

While developing a website, we need to make sure that the code is mobile responsive. Most CMS themes are now mobile-friendly; WordPress, for example, has thousands of mobile-friendly themes.

We can test mobile-friendly design by using Google’s Mobile-Friendly Test. We can also check the Mobile Usability report in Google Search Console.

Google’s Mobile-Friendly Test tool can identify the following errors:

- Plugin Compatibility

- Viewport Errors

- Font Size

- Elements not organized correctly

There are other tests available to check a website’s mobile optimization. We can use them to find the errors and correct them.
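Viewport errors are among the easiest of these to check by hand. As a minimal sketch, the code below looks for a viewport meta tag that sets `width=device-width`, a common baseline for responsive pages. The sample HTML strings are invented, and this check is far narrower than a real mobile-friendliness test.

```python
from html.parser import HTMLParser

class ViewportFinder(HTMLParser):
    """Record the content of the <meta name="viewport"> tag, if present."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        if tag == "meta":
            attributes = dict(attrs)
            if (attributes.get("name") or "").lower() == "viewport":
                self.viewport = attributes.get("content") or ""

def has_responsive_viewport(html):
    """Return True if the page declares a device-width viewport."""
    finder = ViewportFinder()
    finder.feed(html)
    return finder.viewport is not None and "width=device-width" in finder.viewport

# Hypothetical page fragments.
responsive = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
fixed_width = "<head><title>Old desktop-only page</title></head>"

print(has_responsive_viewport(responsive))
print(has_responsive_viewport(fixed_width))
```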

Page Speed

Does page speed have a significant impact on SEO ranking? The answer is yes. Put yourself in a customer’s position: you search for something and get multiple related results. Surely you will prefer the websites that open quickly. Most of us skip websites that take a long time to open. Slow page speed leaves a negative impression on the audience, who will hesitate to revisit the website and will look for a better option.

In 2018, Google increased the importance of page speed with an update called the “Speed Update”. Page speed matters even more on mobile devices. According to Google research, the average website load time on mobile devices is more than 15 seconds, whereas we usually expect a website to load on mobile devices within 3 seconds.

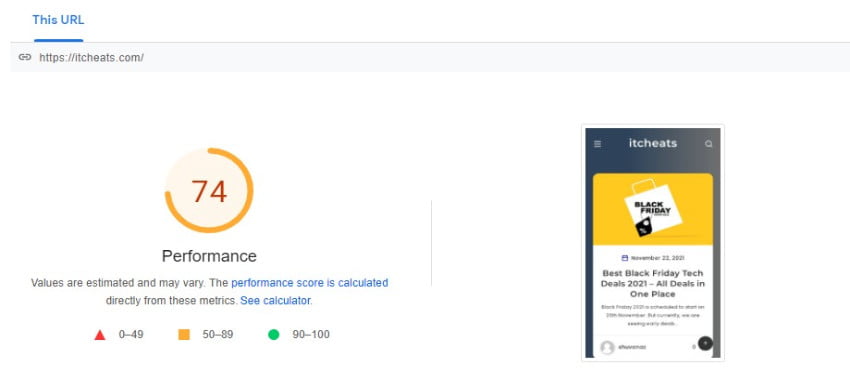

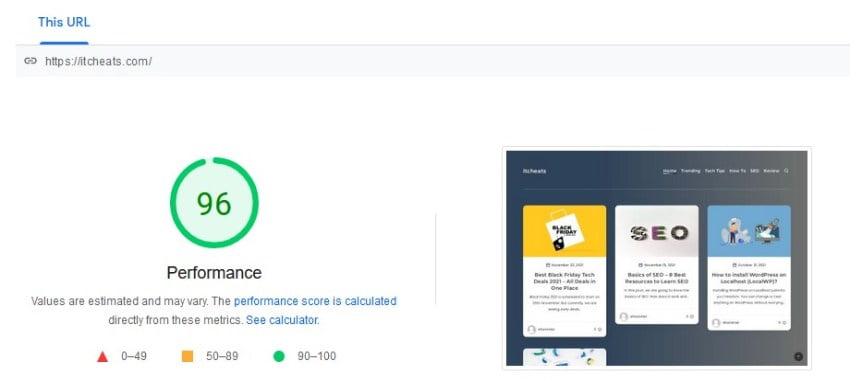

We can check page speed with Google’s PageSpeed Insights, which reports performance for both mobile and desktop devices. Other webpage performance testing tools are also available, including some paid ones. With the help of these tools, we can evaluate our page errors and resolve them to improve page speed.

To increase page speed, we can follow these best practices:

- Compress CSS, HTML and JavaScript

- Optimize Images

- Optimize or minify CSS, HTML and JavaScript

- Use browser cache

- Control redirects

- Use a CDN (content delivery network)

- Improve server response time

- Use better hosting
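Two of these practices, minifying and compressing, are easy to demonstrate. The sketch below uses a deliberately naive CSS minifier and Python’s `gzip` module. The `minify_css` function is a toy written for this example and would not be safe for every stylesheet, and the sample rule is repeated only to give the compressor something to work with.

```python
import gzip
import re

def minify_css(css):
    """Naive minifier: strip comments and unnecessary whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)  # remove comments
    css = re.sub(r"\s+", " ", css)                   # collapse whitespace
    css = re.sub(r"\s*([{};:,])\s*", r"\1", css)     # trim around punctuation
    return css.strip()

def gzip_size(text):
    """Return the size in bytes of `text` after gzip compression."""
    return len(gzip.compress(text.encode("utf-8")))

# Hypothetical stylesheet: one rule repeated to simulate a larger file.
SAMPLE_RULE = """
/* Main layout */
body {
    margin: 0;
    font-family: Arial, sans-serif;
}
"""
stylesheet = SAMPLE_RULE * 40
minified = minify_css(stylesheet)

print("original bytes:", len(stylesheet))
print("minified bytes:", len(minified))
print("minified + gzip bytes:", gzip_size(minified))
```

In practice, minification is usually handled by a build tool or plugin, and compression is switched on in the web server or CDN configuration.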

PageSpeed Insights – Mobile Performance

PageSpeed Insights – Desktop Performance

Duplicate Content

When the same content is available on more than one website or page, it is called duplicate content. The same content at more than one URL may confuse search engines about which version to place in the search results. As a result, search engines might rank all the duplicates lower.

It is always a best practice to reduce the amount of duplicate content on your website. We can do that by following these steps.

- Frequently check search engine tools to find duplicate indexed pages.

- Set up redirects carefully

- For WordPress, set tag and category pages to noindex

- Use canonical tags
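A canonical tag tells search engines which URL is the preferred version of a page. The sketch below derives one canonical URL from several variants. The normalization rules (force HTTPS, lowercase the host, drop the tracking parameters listed in `TRACKING_PARAMS`) are assumptions for this example, and each site has to decide its own.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of query parameters that do not change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign"}

def canonical_url(url):
    """Normalize a URL so that duplicate variants map to one address."""
    parts = urlsplit(url)
    query = [(k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS]
    path = parts.path or "/"
    # Force https, lowercase the host, and drop the fragment.
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(query), ""))

def canonical_tag(url):
    """Return the <link> element to place in the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'

variant = "http://Example.com/shoes?utm_source=newsletter&color=red#reviews"
print(canonical_url(variant))
print(canonical_tag(variant))
```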

Enabling AMP

Accelerated Mobile Pages (AMP) was created by Google to help websites load faster on mobile devices. Thousands of tech professionals collaborated on its development. AMP strips non-essential code: it restricts videos, ads, and animations and includes only the essential content. This allows websites to load very quickly on mobile devices.

Implementing AMP on a website is hard without coding knowledge, since creating an AMP page requires coding experience. For WordPress, however, it can be done with the help of plugins.

AMP Pros:

- Reduce loading time

- Higher speed, which can help rankings

- Easy for WordPress

AMP Cons:

- Need coding experience

- Fewer ads, limited revenue

- Design Limitation

Conclusion

Sometimes we develop a website and start to create content. Then we put our whole focus on managing on-page and off-page SEO to promote the website, and forget that there is another equally important type of SEO: technical SEO.

We need to remember that although most SEO experts highlight on-page and off-page SEO, we cannot ignore technical SEO. It is the base for all other SEO work.

In this article, we tried to put together a technical SEO checklist to follow. It’s not possible to cover all the details of technical SEO in one article. Here we tried to include the key things we must do to make the website fundamentally sound. After that, we can concentrate on other types of SEO.
